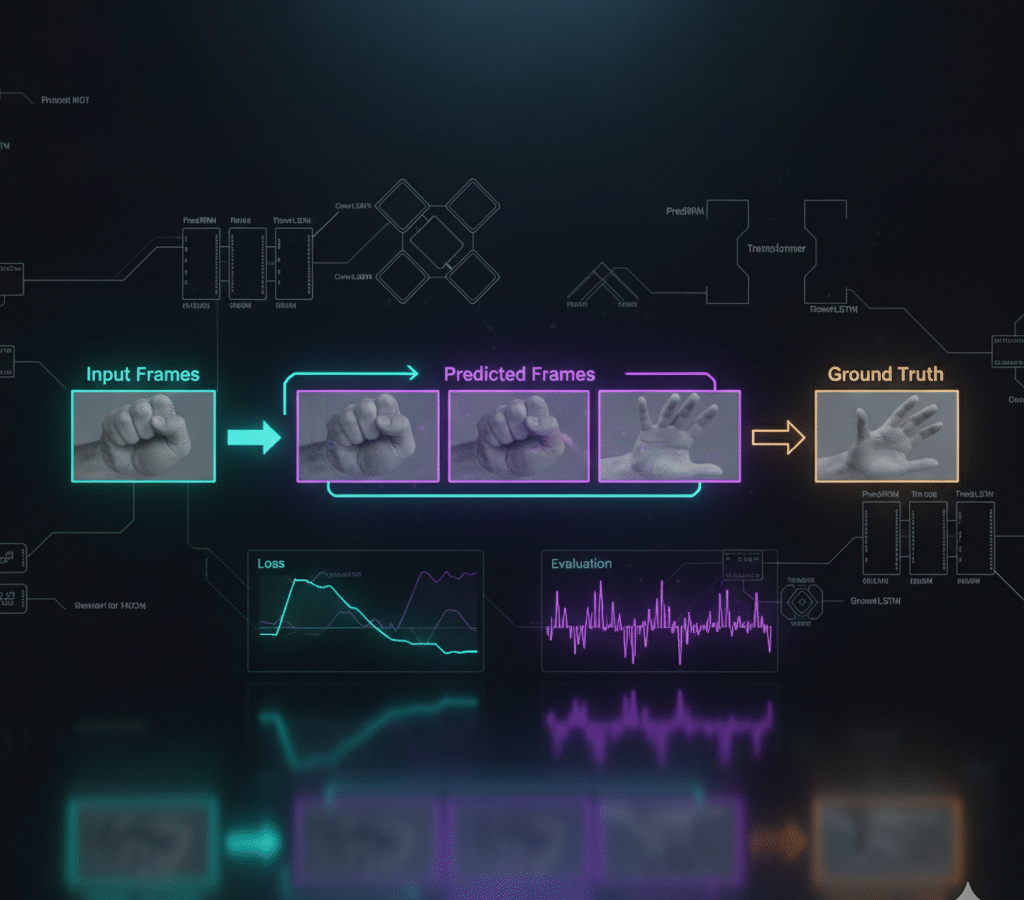

A research-grade PyTorch pipeline for next-frame video prediction that compares PredRNN, ConvLSTM, and Transformer-based models. It trains on curated 64×64 grayscale sequences with temporal-aware encoders, evaluates with MSE/SSIM, renders side-by-side predictions against ground truth, and automates training, checkpointing, and benchmarking.

The pipeline offers features such as:

- PredRNN, ConvLSTM, and Transformer variants (attention/3D-conv baselines).

- Sequence dataset tooling: preprocessing to frame tensors, caching, augmentation.

- Temporal encoders/decoders (ConvLSTM stacks, 3D conv blocks, attention) with scheduled sampling.

- Config-driven experiments (YAML/Hydra), AMP mixed precision, grad clipping, early stopping.

- Metrics & reports: MSE, SSIM, PSNR; per-epoch tables and aggregate leaderboards.

- Visualization: grids and MP4/GIF exports comparing inputs, predictions, ground truth; error heatmaps.

- Experiment tracking: TensorBoard/MLflow scalars, artifacts, and model registry.

- Reproducible runs: deterministic seeds, Dockerfile, GPU/CPU toggles, resume-from-checkpoint.

- Inference CLI for single clips/batch eval; optional ONNX/TorchScript export.

- Ablations: temporal depth, hidden sizes, kernel sizes, and loss variants (MSE, Charbonnier, perceptual).
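
The scheduled sampling listed among the features can be illustrated with a small sketch. This is not the project's implementation: it assumes a linear decay schedule, and the names `teacher_forcing_prob` and `choose_inputs` are hypothetical. Frames are stood in for by arbitrary Python objects so the example runs without PyTorch.

```python
import random


def teacher_forcing_prob(step, total_steps, start=1.0, end=0.0):
    """Linearly decay the probability of feeding the ground-truth frame.

    Assumption: a linear schedule; exponential or inverse-sigmoid decay
    are common alternatives.
    """
    if total_steps <= 0:
        raise ValueError("total_steps must be positive")
    # Clamp training progress to [0, 1] so the probability stays in range.
    frac = min(max(step / total_steps, 0.0), 1.0)
    return start + (end - start) * frac


def choose_inputs(ground_truth, predictions, prob, rng):
    """Per time step, keep the ground-truth frame with probability `prob`;
    otherwise feed back the model's own prediction."""
    return [
        gt if rng.random() < prob else pred
        for gt, pred in zip(ground_truth, predictions)
    ]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed for reproducible mixing
    p = teacher_forcing_prob(step=50, total_steps=100)
    print(p, choose_inputs(["gt1", "gt2", "gt3"], ["p1", "p2", "p3"], p, rng))
```

Early in training the model mostly sees real frames; as `step` grows it is increasingly conditioned on its own outputs, which narrows the gap between training and multi-step inference.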

Research pipelines like this one typically include baseline models (PredRNN, ConvLSTM, Transformer), curated sequence datasets, and metrics such as MSE, SSIM, and PSNR, with side-by-side prediction visuals.
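
As a rough illustration of two of the metrics named above, the following sketch computes MSE and PSNR for a pair of frames with NumPy. It assumes pixel values scaled to [0, 1] (hence `max_val=1.0`); the function names are illustrative and not taken from the project. SSIM is omitted because it needs a windowed implementation.

```python
import numpy as np


def mse(pred, target):
    """Mean squared error between two frames of identical shape."""
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    if pred.shape != target.shape:
        raise ValueError("pred and target must have the same shape")
    return float(np.mean((pred - target) ** 2))


def psnr(pred, target, max_val=1.0):
    """Peak signal-to-noise ratio in dB: 10 * log10(max_val^2 / MSE).

    Assumption: frames are scaled so that `max_val` is the largest
    possible pixel value (1.0 here, 255 for 8-bit images).
    """
    err = mse(pred, target)
    if err == 0.0:
        return float("inf")  # identical frames
    return float(10.0 * np.log10(max_val ** 2 / err))


if __name__ == "__main__":
    target = np.zeros((64, 64))      # 64x64 grayscale frame
    pred = np.full((64, 64), 0.1)    # constant error of 0.1 per pixel
    print(mse(pred, target), psnr(pred, target))
```

Lower MSE and higher PSNR both indicate predictions closer to the ground truth; since PSNR is a log transform of MSE, the two always rank models identically on a single frame.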

Tags: Application