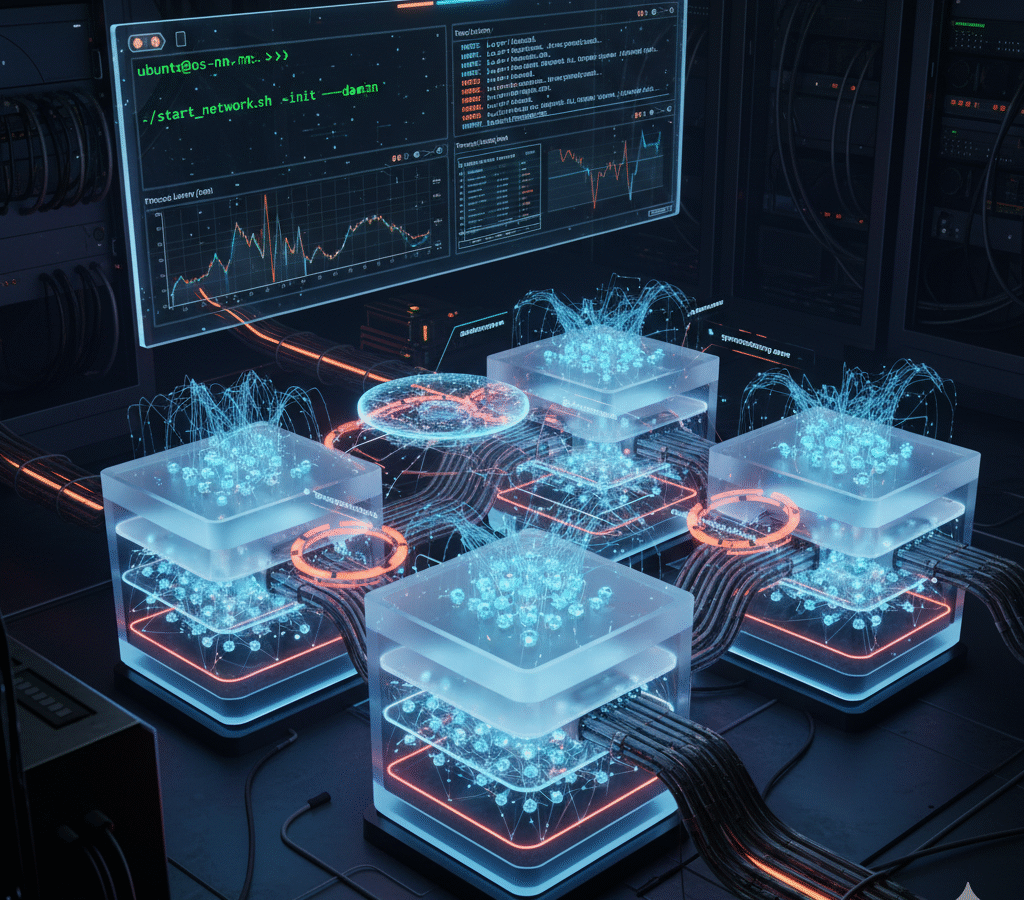

A neural network implemented in C++ on Linux (Ubuntu) with operating-system primitives: each layer runs as a separate process and each neuron executes as a pthread. Processes exchange activations, weights, and biases through named or unnamed pipes. Mini-batches flow forward through the pipeline, and backpropagation sends error signals backward over pipes or shared memory. Mutexes and semaphores, together with careful scheduling and memory management, allow parallel training on multi-core CPUs while keeping runs safe and reproducible.
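The process-per-layer forward pass described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the `Layer` struct, the helper names (`read_all`, `write_all`, `apply_layer`, `forward_pipeline`), and the choice of ReLU are assumptions made for the example. It forks one child per layer and chains the children with unnamed pipes, assuming every layer has at least one neuron and that the data is small enough to fit in the pipe buffer.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

// Hypothetical layer type: weights[j] holds the input weights of neuron j.
struct Layer {
    std::vector<std::vector<double>> weights;
    std::vector<double> bias;
};

// Loop until exactly n bytes are transferred (pipe I/O may be short).
static bool read_all(int fd, void* buf, size_t n) {
    char* p = static_cast<char*>(buf);
    while (n > 0) {
        ssize_t r = read(fd, p, n);
        if (r <= 0) return false;
        p += r;
        n -= static_cast<size_t>(r);
    }
    return true;
}

static bool write_all(int fd, const void* buf, size_t n) {
    const char* p = static_cast<const char*>(buf);
    while (n > 0) {
        ssize_t w = write(fd, p, n);
        if (w <= 0) return false;
        p += w;
        n -= static_cast<size_t>(w);
    }
    return true;
}

// Dense layer followed by ReLU (the activation is an assumption).
static std::vector<double> apply_layer(const Layer& l, const std::vector<double>& in) {
    std::vector<double> out(l.weights.size());
    for (size_t j = 0; j < out.size(); ++j) {
        double z = l.bias[j];
        for (size_t i = 0; i < in.size(); ++i) z += l.weights[j][i] * in[i];
        out[j] = z > 0.0 ? z : 0.0;
    }
    return out;
}

// Pipe i feeds layer i; pipe L returns the last layer's output to the parent.
std::vector<double> forward_pipeline(const std::vector<Layer>& layers,
                                     const std::vector<double>& input) {
    assert(!layers.empty());
    const size_t L = layers.size();
    std::vector<std::array<int, 2>> pipes(L + 1);
    for (auto& p : pipes)
        if (pipe(p.data()) != 0) return {};

    std::vector<pid_t> children;
    for (size_t i = 0; i < L; ++i) {
        pid_t pid = fork();
        if (pid < 0) return {};  // sketch: no cleanup on failure
        if (pid == 0) {          // child: keep only its own two pipe ends
            for (size_t k = 0; k <= L; ++k) {
                if (k != i) close(pipes[k][0]);
                if (k != i + 1) close(pipes[k][1]);
            }
            std::vector<double> in(layers[i].weights[0].size());
            bool ok = read_all(pipes[i][0], in.data(), in.size() * sizeof(double));
            if (ok) {
                std::vector<double> out = apply_layer(layers[i], in);
                ok = write_all(pipes[i + 1][1], out.data(), out.size() * sizeof(double));
            }
            _exit(ok ? 0 : 1);
        }
        children.push_back(pid);
    }
    // Parent: keep the write end of pipe 0 and the read end of pipe L.
    for (size_t k = 0; k <= L; ++k) {
        if (k != L) close(pipes[k][0]);
        if (k != 0) close(pipes[k][1]);
    }
    bool ok = write_all(pipes[0][1], input.data(), input.size() * sizeof(double));
    close(pipes[0][1]);
    std::vector<double> result(layers.back().weights.size());
    ok = ok && read_all(pipes[L][0], result.data(), result.size() * sizeof(double));
    close(pipes[L][0]);
    for (pid_t pid : children) waitpid(pid, nullptr, 0);
    return ok ? result : std::vector<double>{};
}
```

Each child closes every pipe end it does not use, so a failed upstream layer shows up downstream as end-of-file instead of a hang. For a two-layer network with weights `{{1, 1}}` and `{{2}, {-1}}`, biases `{0}` and `{0, 0.5}`, and input `{1, 2}`, the function returns `{6, 0}`.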

The project offers features such as:

- Process-per-layer architecture with pipe-based IPC for activations/weights/biases; optional shared memory for gradient exchange during backprop.

- Thread-per-neuron using pthreads, plus mutexes, semaphores, and barriers to protect critical sections and synchronize forward/backward phases.

- Mini-batch execution: input partitioning, per-batch buffers, and double-buffered queues to keep cores busy and reduce stalls.

- Backpropagation with gradient accumulation and safe weight/bias updates under locks; configurable learning rate/epochs.

- Scheduling/affinity hints to distribute threads across cores; timing/profiling to measure speedups and contention.

- Memory management: per-process arenas/buffers, copy-safe handoffs, and deterministic cleanup (RAII) to avoid leaks.

- Process control: fork()/exec(), wait()/signal handling, graceful shutdown, and error propagation across layers.

- CLI-configurable topology (layers/neurons/activation), dataset loaders, and logging of loss/accuracy per epoch/batch.

- Validation tools: reference checks, confusion-matrix and accuracy metrics, and reproducible seeds.

- Ubuntu-ready build with Makefile, README (run/deploy guide), and screenshots of IPC, synchronization, and process layout.
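To make the thread-per-neuron item concrete, here is a rough sketch of one training step inside a single layer process. It is illustrative only: `LayerState`, `NeuronTask`, `neuron_main`, and `train_step` are hypothetical names, and the linear activation with squared-error loss is an assumption chosen to keep the arithmetic short. Each neuron thread writes its activation to its own slot, waits at a barrier that separates the forward and backward phases, then adds its contribution to the shared input gradient under a mutex before updating its own weights.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>
#include <pthread.h>

// Hypothetical per-layer state shared by all neuron threads.
struct LayerState {
    std::vector<std::vector<double>> w;  // w[j] = weights of neuron j
    std::vector<double> b, x, target;    // biases, layer input, targets
    std::vector<double> out, grad_in;    // activations, gradient w.r.t. x
    double lr = 0.1;                     // learning rate
    pthread_barrier_t barrier;           // separates forward and backward phases
    pthread_mutex_t lock;                // protects grad_in
};

struct NeuronTask { LayerState* s; size_t j; };

static void* neuron_main(void* arg) {
    NeuronTask* t = static_cast<NeuronTask*>(arg);
    LayerState& s = *t->s;
    const size_t j = t->j;

    // Forward phase: each thread writes only its own slot, so no lock is needed.
    double z = s.b[j];
    for (size_t i = 0; i < s.x.size(); ++i) z += s.w[j][i] * s.x[i];
    s.out[j] = z;

    // No thread starts the backward phase until every activation is written.
    pthread_barrier_wait(&s.barrier);

    // Backward phase: squared-error delta for a linear neuron (assumption).
    const double delta = s.out[j] - s.target[j];

    // grad_in is shared by all neurons, so accumulate under the mutex.
    pthread_mutex_lock(&s.lock);
    for (size_t i = 0; i < s.x.size(); ++i) s.grad_in[i] += delta * s.w[j][i];
    pthread_mutex_unlock(&s.lock);

    // Each neuron owns its weights and bias, so the update needs no lock.
    for (size_t i = 0; i < s.x.size(); ++i) s.w[j][i] -= s.lr * delta * s.x[i];
    s.b[j] -= s.lr * delta;
    return nullptr;
}

// Runs one forward/backward step with one pthread per neuron.
void train_step(LayerState& s) {
    const size_t n = s.w.size();
    assert(n > 0);  // a barrier needs a positive thread count
    s.out.assign(n, 0.0);
    s.grad_in.assign(s.x.size(), 0.0);
    pthread_barrier_init(&s.barrier, nullptr, static_cast<unsigned>(n));
    pthread_mutex_init(&s.lock, nullptr);

    std::vector<pthread_t> threads(n);
    std::vector<NeuronTask> tasks(n);
    for (size_t j = 0; j < n; ++j) {
        tasks[j] = NeuronTask{&s, j};
        // Sketch: a failed pthread_create would leave the barrier short.
        pthread_create(&threads[j], nullptr, neuron_main, &tasks[j]);
    }
    for (size_t j = 0; j < n; ++j) pthread_join(threads[j], nullptr);

    pthread_mutex_destroy(&s.lock);
    pthread_barrier_destroy(&s.barrier);
}
```

The gradient contribution is computed before the weight update so that `grad_in` reflects the weights used in the forward pass. On older toolchains this needs `-pthread` at compile and link time.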

In summary, the project implements a C++/Linux neural network with one process per layer and one thread per neuron, using pipes for activations and weights and pipes or shared memory for backpropagation gradients.

Batch training relies on fork()/wait(), pthreads, and mutexes/semaphores/barriers for safe updates, input buffering, and deterministic synchronization. Distributing work across CPU cores (scheduling/affinity) lets layers and neurons execute in parallel rather than merely interleaved, and demonstrates OS concepts in practice.
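The shared-memory path for gradients could look something like the sketch below. It is a simplified stand-in, not the project's code: `map_shared` and `sum_gradients` are hypothetical names, and it assumes every worker produces a gradient vector of the same length. Worker processes created with fork() add their gradients into an anonymous shared mapping, and a process-shared POSIX semaphore serializes the updates.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>
#include <semaphore.h>
#include <sys/mman.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

// Map zero-initialized memory that stays shared across fork().
template <typename T>
static T* map_shared(size_t count) {
    void* p = mmap(nullptr, count * sizeof(T), PROT_READ | PROT_WRITE,
                   MAP_SHARED | MAP_ANONYMOUS, -1, 0);
    return p == MAP_FAILED ? nullptr : static_cast<T*>(p);
}

// Forks one worker per gradient vector; each adds its vector into shared memory.
std::vector<double> sum_gradients(const std::vector<std::vector<double>>& worker_grads) {
    assert(!worker_grads.empty() && !worker_grads[0].empty());
    const size_t n = worker_grads[0].size();

    double* grad = map_shared<double>(n);
    sem_t* lock = map_shared<sem_t>(1);
    if (!grad || !lock) return {};
    sem_init(lock, /*pshared=*/1, /*value=*/1);  // usable across processes

    std::vector<pid_t> children;
    for (const std::vector<double>& g : worker_grads) {
        pid_t pid = fork();
        if (pid == 0) {
            // Worker: the critical section covers the whole accumulation.
            sem_wait(lock);
            for (size_t i = 0; i < n && i < g.size(); ++i) grad[i] += g[i];
            sem_post(lock);
            _exit(0);
        }
        if (pid > 0) children.push_back(pid);
    }
    // wait() doubles as the synchronization point before reading the totals.
    for (pid_t pid : children) waitpid(pid, nullptr, 0);

    std::vector<double> total(grad, grad + n);
    sem_destroy(lock);
    munmap(grad, n * sizeof(double));
    munmap(lock, sizeof(sem_t));
    return total;
}
```

Calling `sum_gradients({{1, 2}, {3, 4}, {0.5, 0.5}})` returns `{4.5, 6.5}` regardless of the order in which the workers run. Compared with sending gradients over pipes, the shared mapping avoids copying the data through the kernel, at the cost of explicit locking.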