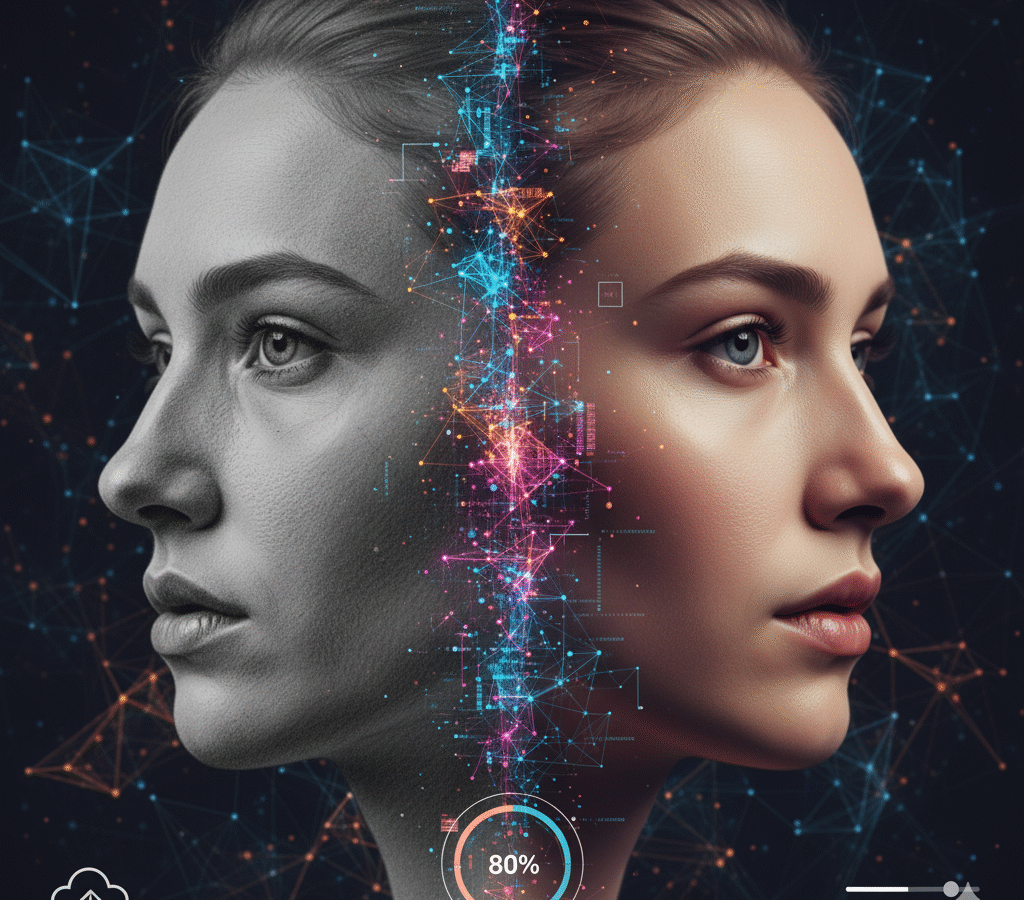

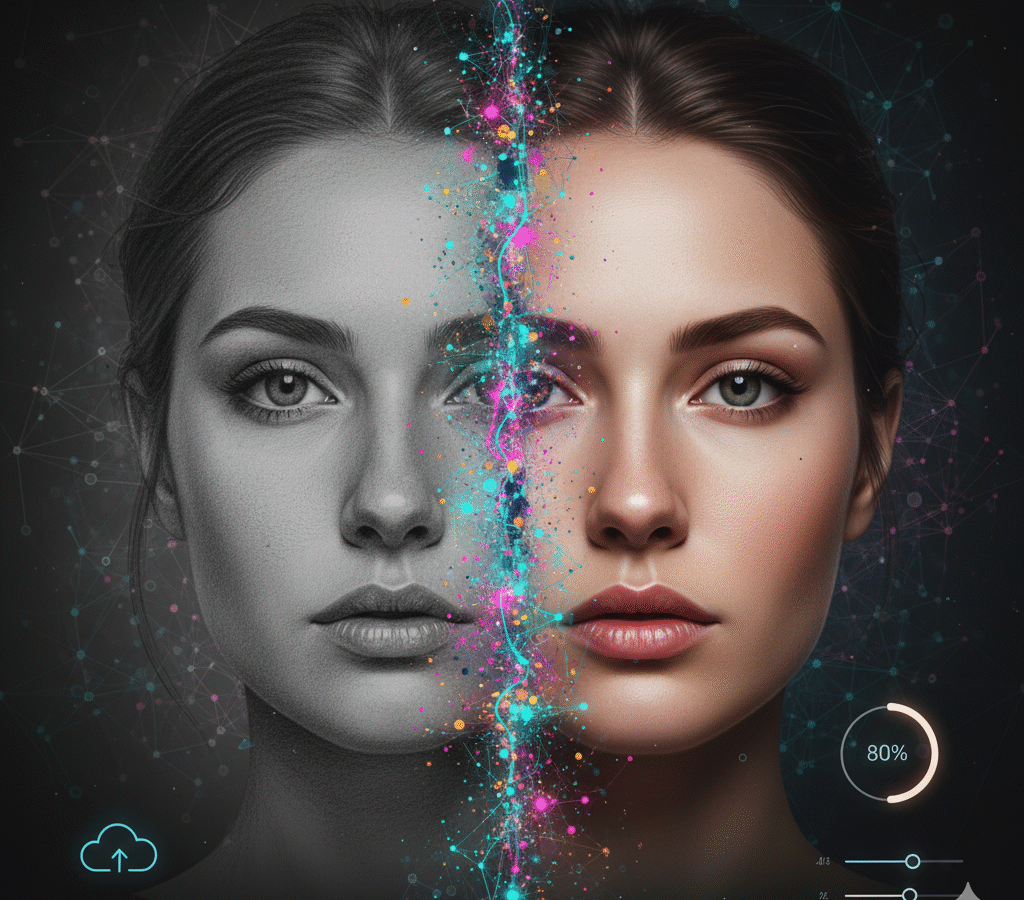

Sketch2Face turns rough facial sketches into photorealistic portraits through a two-stage pipeline. First, a Pix2PixHD conditional GAN maps the line drawing to a clean 256×256 base face. Then Stable Diffusion (img2img and the x4 upscaler) raises resolution and realism while preserving the sketch’s geometry, with optional prompt-based edits such as hair, lighting, or accessories.

The web app pairs a React front end with a Python/PyTorch API (CUDA, mixed precision) packaged in Docker: users upload a sketch, optionally add a prompt, and receive a refined image in a single flow. Training uses the “Person Face Sketches” dataset with data augmentation, periodic checkpoints, and training monitoring, and the system includes safety filters and output watermarking. Sketch2Face is intended for concept art, education, and rapid prototyping, not for recreating the identities of real people.
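The two-stage flow can be sketched in plain Python. This is a minimal, dependency-free illustration under stated assumptions, not the project's implementation. Images are modeled as nested lists of grayscale values. The two stages are injected as callables; in the real system they would wrap a Pix2PixHD generator and a Stable Diffusion img2img/upscale call. The names `Sketch2FacePipeline`, `resize_nearest`, `to_model_range`, and the default prompt are hypothetical. Scaling the input to [-1, 1] assumes a tanh-output generator, which is the usual setup for Pix2PixHD-style models.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical image representation: rows of grayscale pixel values.
Image = List[List[float]]

BASE_SIZE = 256  # stage-1 resolution stated in the README


def resize_nearest(img: Image, size: int) -> Image:
    """Nearest-neighbour resize of a 2-D grayscale image to size x size."""
    h, w = len(img), len(img[0])
    return [[img[r * h // size][c * w // size] for c in range(size)] for r in range(size)]


def to_model_range(img: Image) -> Image:
    """Map 8-bit values [0, 255] to [-1, 1], the range a tanh-output generator expects."""
    return [[p / 127.5 - 1.0 for p in row] for row in img]


@dataclass
class Sketch2FacePipeline:
    # Stage 1: would wrap a Pix2PixHD generator in the real system (hypothetical hook).
    base_generator: Callable[[Image], Image]
    # Stage 2: would wrap a Stable Diffusion img2img/upscale call (hypothetical hook).
    refiner: Callable[[Image, str], Image]
    default_prompt: str = "photorealistic portrait"  # hypothetical base prompt

    def run(self, sketch: Image, prompt: Optional[str] = None) -> Image:
        # Stage 1 input: resize to the base resolution and rescale pixel values.
        x = to_model_range(resize_nearest(sketch, BASE_SIZE))
        base_face = self.base_generator(x)
        # Optional user edits (hair, lighting, accessories) are appended to the base prompt.
        full_prompt = f"{self.default_prompt}, {prompt}" if prompt else self.default_prompt
        return self.refiner(base_face, full_prompt)
```

Injecting the stages as callables keeps the orchestration testable without a GPU. Stub functions can stand in for both models, so the resizing, scaling, and prompt handling can be checked on their own.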

- Built a deep learning application that converts hand-drawn facial sketches into realistic human images using a combination of Pix2PixHD and Stable Diffusion models.

- Deployed model inference through a Flask API and integrated a user-friendly UI for sketch uploads, real-time generation, and prompt-based image editing.

- Optimized GPU inference for fast result delivery and added checkpoint-based resumption for long generation sessions.
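Checkpoint resumption for long sessions can be illustrated with a small, dependency-free sketch. It assumes that a session is a sequence of numbered steps and that progress is persisted to a JSON file. The file layout and the helpers `save_checkpoint`, `load_checkpoint`, and `run_session` are hypothetical. In a PyTorch codebase the saved state would usually contain tensors and be written with `torch.save`, but the resume logic is the same: write atomically, reload on start, and skip completed steps.

```python
import json
import os
import tempfile
from typing import Any, Callable, Dict


def save_checkpoint(path: str, state: Dict[str, Any]) -> None:
    """Write state atomically: dump to a temp file beside the target, then rename over it."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w") as f:
            json.dump(state, f)
        os.replace(tmp, path)  # rename is atomic on POSIX when both paths share a filesystem
    except BaseException:
        os.remove(tmp)  # never leave a half-written temp file behind
        raise


def load_checkpoint(path: str) -> Dict[str, Any]:
    """Return the saved state, or a fresh state if no checkpoint exists yet."""
    if not os.path.exists(path):
        return {"step": 0, "outputs": []}
    with open(path) as f:
        return json.load(f)


def run_session(path: str, total_steps: int, step_fn: Callable[[int], Any],
                checkpoint_every: int = 1) -> Dict[str, Any]:
    """Run step_fn for steps not yet completed, checkpointing so an interrupted session resumes.

    step_fn results must be JSON-serializable in this sketch.
    """
    state = load_checkpoint(path)
    for step in range(state["step"], total_steps):
        state["outputs"].append(step_fn(step))
        state["step"] = step + 1
        if state["step"] % checkpoint_every == 0 or state["step"] == total_steps:
            save_checkpoint(path, state)
    return state
```

Writing to a temporary file and swapping it in with `os.replace` means that a crash during a write leaves the previous checkpoint intact instead of a truncated file.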

A React + Python/PyTorch app delivers one-click results with optional prompt edits.